Vacuum Tubes and Transistors

What can AI learn from the age of vacuum tubes?

I’ve had a ham radio license since the late 1960s and observed the transition from vacuum tubes (remember them?) to transistors firsthand. Because we’re allowed to operate high-power transmitters (1,500-watt output), tubes have hung on in our world a lot longer than elsewhere. There’s a good reason: tubes are ideal high-power devices for people who don’t always know what they’re doing, people who are just smart enough to be dangerous. About the only way you can damage them is by getting them hot enough to melt the internal components. That happens… but it means that there’s a huge margin for error.

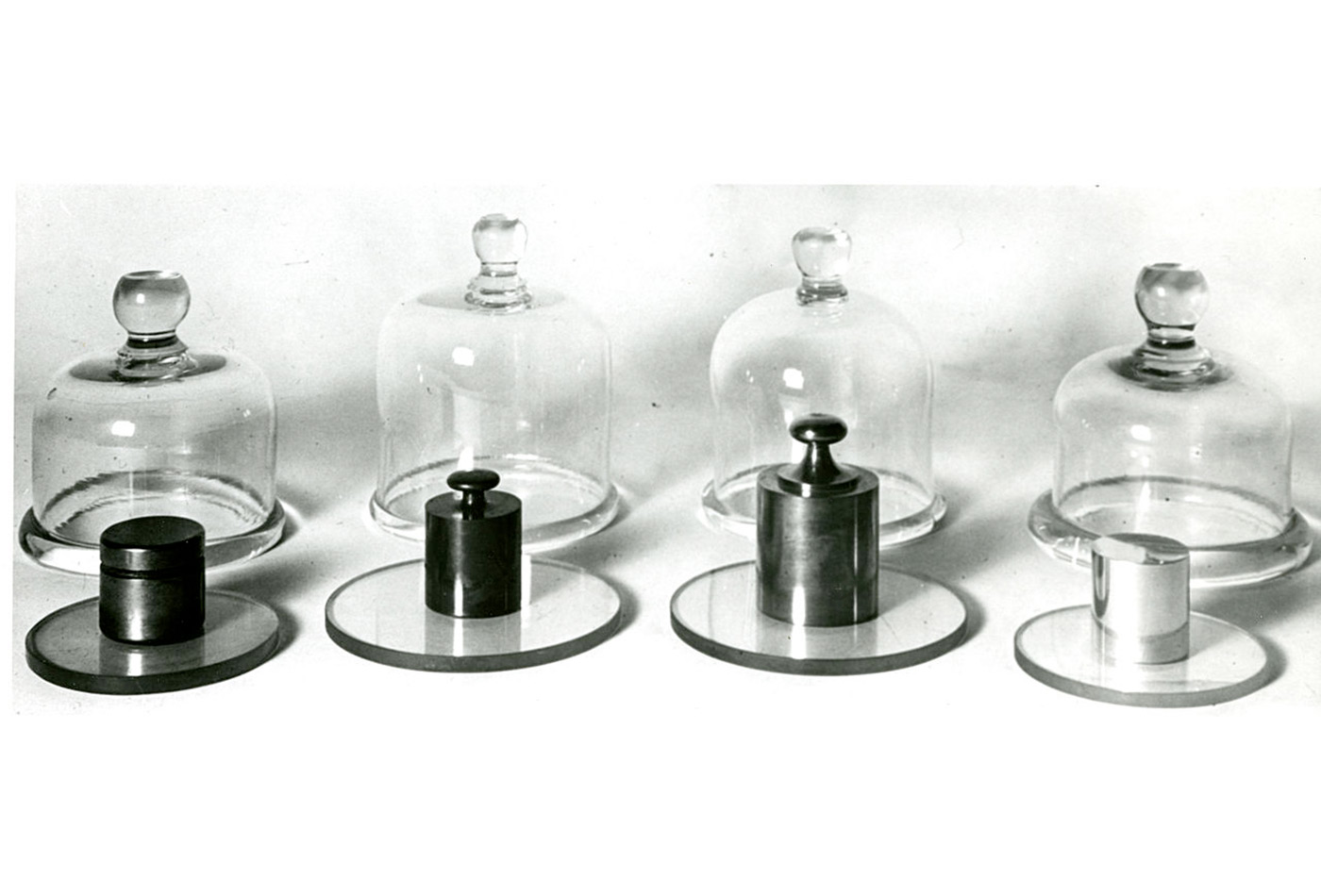

3-1000Z, one of the last large glass-bottle vacuum tubes. Capable of 1,500-watt output.

Transistors are the opposite. If a transistor exceeds its specifications for a millionth of a second, it will be destroyed. If tubes are like football players, transistors are like professional dancers: very strong, very powerful, but if they land wrong, there’s a serious sprain. As a result, there’s a big difference between high-power tube equipment and transistor equipment. To cool a vacuum tube, you put a fan next to it. To cool a transistor that’s generating 500 watts of heat from an area the size of a dime, you need a heavy copper spreader, a huge heat sink, and multiple fans. A tube amplifier is a box with a big power supply, a large vacuum tube, and an output circuit. A transistor amplifier has all of that, plus computers, sensors, and lots of other electronics to shut it down if anything looks like it’s going wrong. A lot of adjustments that you used to make by turning knobs have been automated. It’s easy to see the automation as a convenience, but in reality it’s a necessity. If these adjustments weren’t automated, you’d burn out the transistors before you got on the air.

Software has been making a similar transition. The early days of the web were simple: HTML, some minimal JavaScript, CSS, and CGI. Applications have obviously been getting more complex; backends with databases, middleware, and complex frontend frameworks have all become part of our world. Attacks against applications of all kinds have grown more common and more serious. Observability is the first step in a “transistor-like” approach to building software. It’s important to make sure that you can capture enough relevant data to predict problems before they become problems; only capturing enough data for a postmortem analysis isn’t sufficient.

Although we’re moving in the right direction, with AI the stakes are higher. This year, we’ll see AI incorporated into applications of all kinds. AI introduces many new problems that developers and IT staff will need to deal with. Here’s a start at a list:

- Security issues: Whether they do it maliciously or just for lols, people will want to make your AI act incorrectly. You can expect racist, misogynist, and just plain false output. And you will find that these are business issues.

- More security issues: Whether by “accident” or in response to a malicious prompt, we’ve seen that AI systems can leak users’ data to other parties.

- Even more security issues: Language models are frequently used to generate source code for computer programs. That code is frequently insecure. It’s even possible that attackers could force a model to generate insecure code on their command.

- Freshness: Models grow “stale” eventually and need to be retrained. There’s no evidence that large language models are an exception. Languages change slowly, but the topics about which you want your model to be conversant will not.

- Copyright: While these issues are only starting to work their way through the courts, developers of AI applications will almost certainly have some liability for copyright violation.

- Other liability: We’re only beginning to see legislation around privacy and transparency; Europe is the clear leader here. Whether or not the US ever has effective laws regulating the use of AI, companies need to comply with international law.

That’s only a start. My point isn’t to enumerate everything that can go wrong; it’s that complexity is growing in ways that make in-person monitoring impossible. This is something the financial industry learned a long time ago (and continues to learn). Algorithmic trading systems need to monitor themselves constantly and alert humans to intervene at the first sign something is wrong; they must have automatic “circuit breakers” to shut the application down if errors persist; and it must be possible to shut them down manually if these other methods fail. Without these safeguards, the result might look like Knight Capital, a company whose algorithmic trading software made $440M worth of mistakes on its first day in production.
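Those three layers (alert a human early, trip automatically if errors persist, and keep a manual kill switch) are simple enough to sketch in a few lines of Python. This is only an illustration of the pattern, not how any real trading system or AI platform implements it: the names `CircuitBreaker` and `guarded_call`, the failure threshold, and the idea of a per-call `check` function are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class CircuitBreaker:
    """Hypothetical breaker: trips after repeated failures or by hand."""
    max_consecutive_failures: int = 3  # assumed threshold, tune for your system
    consecutive_failures: int = 0
    tripped: bool = False
    alerts: list = field(default_factory=list)

    def record(self, ok: bool) -> None:
        """Record the outcome of one automated check on the application's output."""
        if ok:
            self.consecutive_failures = 0
            return
        self.consecutive_failures += 1
        # Layer 1: alert a human at the first sign that something is wrong.
        self.alerts.append(f"check failed ({self.consecutive_failures} in a row)")
        # Layer 2: shut down automatically if the errors persist.
        if self.consecutive_failures >= self.max_consecutive_failures:
            self.tripped = True

    def kill(self) -> None:
        """Layer 3: manual shutdown, for when the automatic safeguards miss something."""
        self.tripped = True

    def allow(self) -> bool:
        """True while the application is still permitted to act."""
        return not self.tripped


def guarded_call(breaker, action, check):
    """Run `action` only while the breaker is closed, then feed the check result back."""
    if not breaker.allow():
        return None  # application is shut down; do nothing
    result = action()
    breaker.record(check(result))
    return result
```

In use, every call the application makes (to a model, to an exchange) would go through `guarded_call`, with `check` standing in for whatever automated test you trust to judge the output. Note that this sketch never resets itself once it has tripped; deciding when it’s safe to turn things back on is deliberately left to a human.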

The problem is that the AI industry hasn’t yet learned from the experience of others. It’s still moving fast and breaking things at the same time that it’s making the transition from relatively simple software (and yes, I consider a big React-based frontend with an enterprise backend “relatively simple” compared to LLM-based applications) to software that entangles many more processing nodes, software whose workings we don’t fully understand, and software that can cause damage at scale. And, like a modern high-power transistor amplifier, this software is too complex and fragile to be managed by hand. It’s still not clear that we know how to build the automation that we need to manage AI applications. Learning how to build those automation systems must become a priority for the next few years.